Deep neural networks are often very powerful in data-driven application domains, but their main drawback is their lack of interpretability. Therefore, it is crucial to investigate the evidence for and provide explanations of the decisions made by deep neural network models in specific application scenarios (e.g., financial analysis, medical diagnosis).

In the field of computer vision, most of the popular model interpretation methods are feature-based, and most of these methods provide instance-based interpretations via saliency maps. However, these feature-based interpretations may not match human understanding, because humans usually understand an image through higher-level concepts. Therefore, the class-wise interpretation provided by concept-based methods is often easier for humans to understand.

Interpreting deep neural network models through concepts, in a way that improves human understanding of and confidence in the models, raises several challenges.

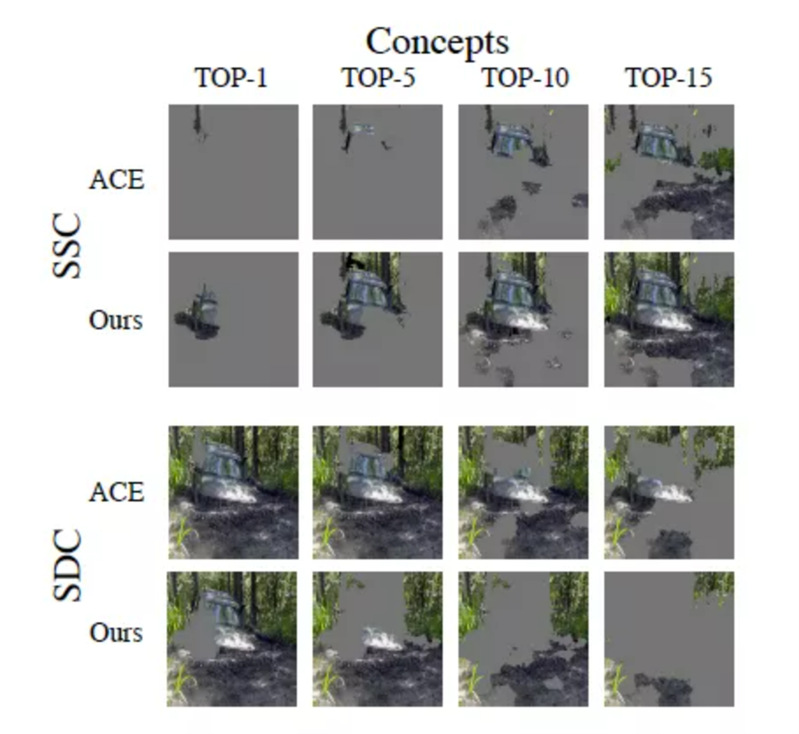

(1) Both class-wise and instance-wise interpretations. Class-wise interpretations analyze the decision boundaries of the model; instance-wise interpretations analyze the meaning of the different concepts in each instance. Both are equally important in improving human understanding of the model.

(2) Interaction between concepts. Concepts are not independent of each other; they may have a physical interaction (interaction with concepts in spatially adjacent regions) or a semantic interaction (interaction with semantically similar concepts).

(3) Concept evaluation method. A good concept should be expressive and play an important role in decision making in the model.

Recently, a group led by Dr Kun Kuang set out to address the above issues and proposed a novel COncept-based NEighbor Shapley approach (dubbed CONE-SHAP) that evaluates the importance of each concept by considering its physical and semantic neighbors, and interprets model knowledge with both instance-wise and class-wise explanations. In detail, their work

(1) provided both instance-based and class-based interpretations of neural network models through concept-based interpretations.

(2) evaluated the importance of concepts based on the Shapley value. The proposed COncept-based NEighbor Shapley (CONE-SHAP) method computes Shapley values with low complexity and considers both physical and semantic interactions between concepts.

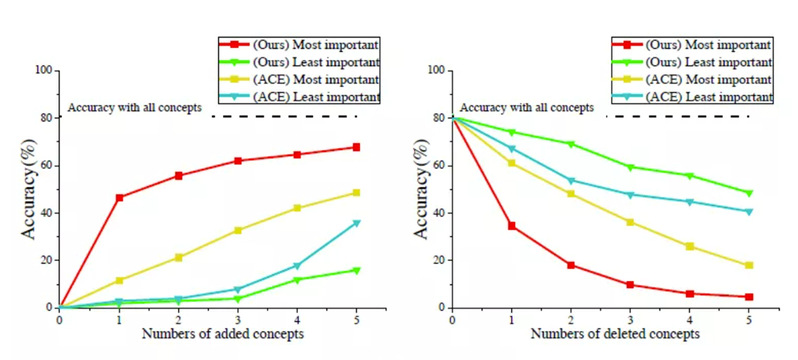

(3) proposed three metrics (coherency, complexity and faithfulness) to quantitatively and comprehensively evaluate the quality of concepts.
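
For reference, the Shapley value mentioned in item (2) has a standard game-theoretic definition. The notation here is chosen for illustration and is not taken from the paper: $N$ is the set of $n$ concepts, and $v(S)$ is a score the model assigns when only the concepts in $S$ are present.

$$
\phi_i(v) = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(n - |S| - 1)!}{n!}\,\bigl(v(S \cup \{i\}) - v(S)\bigr)
$$

The sum runs over all $2^{n-1}$ subsets of the other concepts, so an exact evaluation grows exponentially with $n$.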

With this design, the interactions among concepts in the same image are fully considered. Meanwhile, the computational complexity of the Shapley value is reduced from exponential to polynomial. Moreover, for a more comprehensive evaluation, the group further proposed three criteria to quantify the rationality of the contributions allocated to the concepts: coherency, complexity, and faithfulness.
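
The complexity reduction can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes that a neighbor-based approximation simply restricts the coalitions for each concept to that concept's neighbors, and the names `shapley_value`, `neighbor_shapley`, `toy_value`, and the toy game itself are hypothetical. With at most k neighbors per concept, each concept needs 2^k evaluations of the value function instead of 2^(n-1).

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet, Iterable, List

ValueFn = Callable[[FrozenSet[str]], float]


def shapley_value(player: str, others: Iterable[str], value: ValueFn) -> float:
    """Exact Shapley value of `player` in the game played by `player` and `others`."""
    others = list(others)
    n = len(others) + 1
    total = 0.0
    for size in range(len(others) + 1):
        # Standard Shapley weight for coalitions of this size.
        weight = factorial(size) * factorial(n - size - 1) / factorial(n)
        for subset in combinations(others, size):
            coalition = frozenset(subset)
            # Marginal contribution of `player` when joining `coalition`.
            total += weight * (value(coalition | {player}) - value(coalition))
    return total


def neighbor_shapley(
    players: List[str], neighbors: Dict[str, List[str]], value: ValueFn
) -> Dict[str, float]:
    """Approximation (assumed form): only coalitions of neighbors are enumerated."""
    return {p: shapley_value(p, neighbors[p], value) for p in players}


# Hypothetical toy game: four concepts with additive scores, plus a bonus
# when the interacting concepts "a" and "b" appear together.
WEIGHTS = {"a": 1.0, "b": 2.0, "c": 3.0, "d": 4.0}


def toy_value(coalition: FrozenSet[str]) -> float:
    bonus = 2.0 if {"a", "b"} <= coalition else 0.0
    return sum(WEIGHTS[p] for p in coalition) + bonus


players = list(WEIGHTS)

# Exact values: every other concept is a potential coalition member.
exact = {
    p: shapley_value(p, [q for q in players if q != p], toy_value)
    for p in players
}

# Neighbor-based values: each concept only interacts with one neighbor.
neighbors = {"a": ["b"], "b": ["a"], "c": ["d"], "d": ["c"]}
approx = neighbor_shapley(players, neighbors, toy_value)
```

In this toy game the neighbor lists happen to capture every interaction, so `approx` matches `exact`. When an interacting concept is left out of a neighbor list, the two can differ, which is why the choice of physical and semantic neighbors matters.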

Extensive experiments and ablations have demonstrated that the CONE-SHAP algorithm outperforms existing concept-based methods and simultaneously provides precise explanations for each instance and class.

The work, "Instance-wise or Class-wise? A Tale of Neighbor Shapley for Concept-based Explanation", was accepted by ACM MM 2021 and published in the Proceedings of the 29th ACM International Conference on Multimedia.

About Dr Kuang

Dr Kun Kuang is an Associate Professor in the College of Computer Science and Technology at Zhejiang University. He received his Ph.D. from the Department of Computer Science and Technology at Tsinghua University in 2019, co-advised by Prof. Shiqiang Yang and Prof. Peng Cui. From Sep. 2017 to Sep. 2018, he visited Prof. Susan Athey's group at Stanford University as a visiting student. He also worked with Prof. Bo Li and Prof. Wenwu Zhu at Tsinghua University.

Dr Kuang's research interests include causal inference, machine learning, and data mining. In particular, he is dedicated to promoting the convergence of causal inference and machine learning, with a focus on improving the effectiveness, stability, and interpretability of causal inference with machine learning technologies.

About SIAS

Shanghai Institute for Advanced Study of Zhejiang University (SIAS) is a new research and development institution jointly launched by the Shanghai Municipal Government and Zhejiang University in June 2020. The platform represents an intersection of technology and economic development, serving as a market-leading trailblazer to cultivate a novel community for innovation among enterprises.

SIAS is seeking top talent working on the frontiers of computational sciences who can envision and actualize a research program that will bring new solutions to areas including, but not limited to, Artificial Intelligence, Computational Biology, Computational Engineering and Fintech.